A new optical lattice clock sets a record for accuracy, drifting by only one second in 39.15 billion years.

Researchers have developed a clock with a fractional error of about 8 × 10⁻¹⁹, or eight-tenths of a billionth of a billionth. At that precision, the clock would take 39.15 billion years, nearly three times the age of the universe, to drift by one second, IFL Science reported on March 29. In that time, the Sun could be born and die about four times.
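The link between "one second in 39.15 billion years" and a fractional error is simple arithmetic, and a rough sketch makes it easy to check. The function names below are illustrative, and the year length (a Julian year of 365.25 days) and the 13.8-billion-year age of the universe are assumptions, not figures from the report.

```python
# Rough conversion between "drifts one second in N years" and a fractional error.
# Assumption: one year is a Julian year of 365.25 days.
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def fractional_error(years_per_second_lost: float) -> float:
    """Fractional error implied by drifting 1 s over the given number of years."""
    return 1.0 / (years_per_second_lost * SECONDS_PER_YEAR)


def years_to_drift_one_second(frac_error: float) -> float:
    """Inverse conversion: years needed to accumulate a 1 s drift."""
    return 1.0 / (frac_error * SECONDS_PER_YEAR)


drift_span_years = 39.15e9      # figure quoted in the article
universe_age_years = 13.8e9     # assumed approximate age of the universe

print(f"fractional error: {fractional_error(drift_span_years):.2e}")
print(f"multiples of the universe's age: {drift_span_years / universe_age_years:.2f}")
```

Under these assumptions the fractional error comes out at about 8.1 × 10⁻¹⁹, and 39.15 billion years is about 2.8 times the age of the universe.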

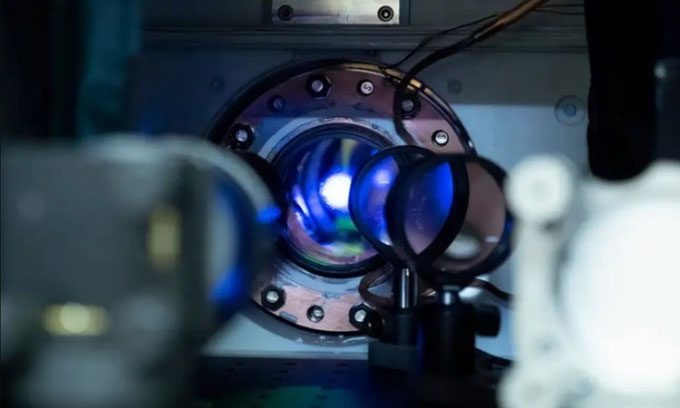

Optical atomic clock at the National Institute of Standards and Technology in the USA. (Photo: R. Jacobson)

This device is an optical lattice clock that uses 40,000 strontium atoms trapped in a one-dimensional optical lattice. The atoms are held at a temperature just above absolute zero, and each tick of the clock corresponds to an electron in the atom transitioning between energy levels.

The research team has spent many years developing optical atomic clocks, which reach a level of accuracy that conventional cesium atomic clocks cannot. Over the past few years, they have worked to reduce the error margin and systematic effects to further improve the device's accuracy, according to lead researcher Alexander Aeppli from the University of Colorado Boulder. They hope to make time measurement more than 10 times, or even 100 times, more accurate.

This type of clock could underpin a new definition of the second and open the door to new discoveries. Atomic clocks are highly sensitive to relativistic effects, and the optical lattice clock is over 1,000 times more sensitive, so it could help measure gravity in unprecedented detail and test the theory of general relativity. The research team has described the device in a paper on the arXiv preprint server.
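To give a feel for why such a clock is useful for probing gravity, here is a back-of-the-envelope sketch using the standard weak-field gravitational redshift, Δf/f ≈ gΔh/c². It is an illustration only, not a calculation from the research team: the function names are made up, the surface gravity value is an assumed round number, and it assumes the fractional error implied by one second in 39.15 billion years can be applied directly to a comparison of two clocks at different heights.

```python
# Weak-field gravitational redshift near Earth's surface: df/f ~ g * dh / c**2.
# Illustrative sketch only; names and the value of g are assumptions.
G_SURFACE = 9.81                  # m/s^2, assumed typical surface gravity
C = 299_792_458.0                 # m/s, speed of light (exact by definition)
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def redshift_per_height(delta_h_m: float) -> float:
    """Fractional frequency shift between clocks separated by delta_h_m meters."""
    return G_SURFACE * delta_h_m / C**2


def resolvable_height(frac_error: float) -> float:
    """Height difference (m) whose redshift equals the given fractional error."""
    return frac_error * C**2 / G_SURFACE


# Fractional error implied by drifting one second in 39.15 billion years.
frac = 1.0 / (39.15e9 * SECONDS_PER_YEAR)

print(f"shift per meter of height: {redshift_per_height(1.0):.2e}")
print(f"resolvable height: {resolvable_height(frac) * 1000:.1f} mm")
```

Under these assumptions, raising a clock by one meter shifts its rate by roughly one part in 10¹⁶, so a clock at this level of accuracy would in principle notice height differences of several millimeters.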