A new report commissioned by the US government warns that artificial intelligence (AI) could pose an "extinction-level" threat to humanity, and calls for thresholds to control how powerful AI systems are allowed to become.

The report, obtained by Time and titled Action Plan to Enhance Safety and Security for Advanced AI, proposes a wide range of unprecedented policy measures for artificial intelligence. If implemented, these measures could radically transform the AI industry.

“The development of advanced AI poses urgent and growing risks to national security,” the report’s introduction states. “The rise of advanced AI and Artificial General Intelligence (AGI) could destabilize global security in ways reminiscent of the introduction of nuclear weapons.”

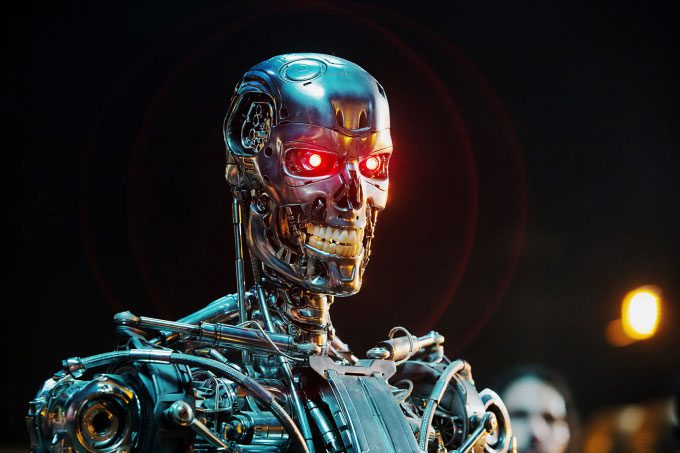

A robot from the movie “Terminator.” (Photo: Paramount)

Specifically, the report recommends that the US Congress make it illegal to train AI models using more than a specified amount of computing power, with that threshold set by a new federal AI agency. The agency would have the authority to require AI companies to obtain government permission before training or deploying new models above the threshold.

Restricting the training of AI systems above this threshold would help “regulate” competition among AI developers and slow the race in both hardware and software development. Over time, the federal agency could raise the threshold to permit the training of more advanced systems once it judges this “sufficiently safe,” or lower it when “hazards are detected” in existing models.

The report also says that authorities should “urgently” consider restricting the publication of the “weights,” or inner workings, of powerful AI models, with violations potentially punishable by imprisonment. In addition, it urges the government to tighten controls on the manufacture and export of AI chips and to direct federal funding toward research on making advanced AI safer.

The report was produced by Gladstone AI, a four-person company that runs technical briefings on AI for government employees, under a $250,000 contract awarded by the US State Department in November 2022. The 247-page report was delivered to the State Department on February 26.

The US State Department has not commented on the report.

The report’s three authors spent 13 months developing the plan, speaking with more than 200 government employees, experts, and staff at leading AI companies such as OpenAI, Google DeepMind, Anthropic, and Meta. However, the first page of the report states that “the recommendations do not reflect the views of the State Department or the US government.”

Greg Allen, an expert at the Center for Strategic and International Studies (CSIS), noted that the proposals are likely to face political obstacles. “I think the recommendations are unlikely to be adopted by the US government. Unless there is some ‘external shock,’ I think the current approach is unlikely to change.”

AI is currently growing explosively, with many models able to perform tasks that were once the stuff of science fiction. In light of this, many experts have warned about its potential dangers. In March 2023, more than 1,000 prominent figures in technology, including billionaire Elon Musk and Apple co-founder Steve Wozniak, signed an open letter urging AI companies and organizations worldwide to pause the AI race for six months so they could jointly develop a shared set of rules for the technology.

In mid-April 2023, Google CEO Sundar Pichai said that concerns about AI’s potential dangers had kept him awake at night, adding that society was not prepared for the technology’s rapid development.

A month later, a statement signed by 350 AI leaders and experts issued a similar warning. “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war,” read the statement, published in May 2023 on the website of the San Francisco-based Center for AI Safety (CAIS).

A Stanford University survey published in April 2023 also found that 56% of computer scientists and researchers believe generative AI will lead to AGI in the near future. In addition, 58% of AI experts consider AGI a “major concern,” and 36% believe the technology could cause a “nuclear-level disaster.” Some suggest that AGI could bring about the so-called “technological singularity”: a hypothetical future point at which machines irreversibly surpass human capabilities, posing a threat to civilization.

“The advances in AI and AGI models over the past few years have been astounding,” DeepMind CEO Demis Hassabis told Fortune. “I see no reason for that progress to slow down. We have only a few years, or at most a decade, to prepare.”