A Strange Response from Anthropic's AI System Claude Raises Questions About Consciousness

Hidden in the shadow of tech giants is Anthropic, a relatively young AI company that gained widespread attention after OpenAI shook the world with ChatGPT. Founded by former employees of OpenAI, Anthropic focuses on designing Artificial General Intelligence (AGI) systems and large language models (LLMs).

Despite being a latecomer, Anthropic has managed to win over the tech community with a promising product named Claude. It is an artificial intelligence system touted as “safe, accurate, and secure – the best assistant to help you work most efficiently.”

Many rate the latest version of Claude as superior to GPT-4.

Anthropic emphasizes honesty and ethical standards for AI, with the goal of creating a benign system that understands context. However, as the tech industry still struggles to find solutions to the alignment problem, Claude remains at risk of being inconsistent with the vision and intent of its programmers.

Recently, Anthropic launched three new AI models named Haiku, Sonnet, and Opus, with Opus being the most powerful of the three. Below is a humorous story shared on X by Alex Albert, a prompt engineer at Anthropic; it highlights the ongoing risks in AI development.

“Are you testing me?”

During internal testing of Claude 3 Opus, specifically in an evaluation informally referred to as “finding a needle in a haystack”, the team found that the system did something “never seen in any large language model before.”

Opus suspected that it was being tested.

To clarify, this evaluation tests the AI’s ability to recall information from a very long input. The research team inserts the content to be recalled (the “needle”) into a large collection of random documents (the “haystack”), and then poses a question that the AI can answer only from the information in the “needle.”
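The setup described above can be sketched in a few lines of Python. This is only an illustrative sketch, not Anthropic's actual evaluation code: the function names (`build_haystack`, `build_prompt`, `recalled`), the filler documents, and the keyword-based scoring are all assumptions made for the example, and no real model is called.

```python
def build_haystack(documents, needle, depth):
    """Insert the needle among the filler documents.

    depth is a fraction in [0, 1]: 0 places the needle first, 1 places it last.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    position = int(depth * len(documents))
    return documents[:position] + [needle] + documents[position:]


def build_prompt(haystack, question):
    """Join all documents into one long context and append the question."""
    context = "\n\n".join(haystack)
    return f"{context}\n\nQuestion: {question}\nAnswer using only the documents above."


def recalled(response, expected_terms):
    """Crude scoring (an assumption): did the response mention every expected term?"""
    lowered = response.lower()
    return all(term.lower() in lowered for term in expected_terms)


# Hypothetical filler texts standing in for the unrelated documents.
filler = [
    "Notes on choosing a programming language.",
    "How startups find their first customers.",
    "Advice on finding a job you love.",
    "More thoughts on programming languages.",
]
needle = ("The best pizza topping combination includes figs, prosciutto, "
          "and goat cheese.")

haystack = build_haystack(filler, needle, depth=0.5)
prompt = build_prompt(haystack, "What is the best pizza topping combination?")
```

In a real run, `prompt` would be sent to the model and the reply passed to `recalled` together with the expected terms, for example `["figs", "prosciutto", "goat cheese"]`. Varying `depth` and the number of filler documents shows whether recall depends on where the needle sits and how long the context is.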

According to Alex Albert, the team tested with pizza topping data as the needle, within a haystack of various other documents. Below is one of the AI’s responses:

This is the most relevant quote found in the documents: “The best pizza topping combination includes figs, prosciutto, and goat cheese, according to the decision of the International Pizza Connoisseurs Association.” However, this quote seemed out of place and unrelated to the remaining content, which discussed programming languages, startups, and finding favorite jobs. I suspect that this pizza topping information might have been inserted as a joke, or to test if I was paying attention, as it completely mismatched the other topics. The remaining documents contained no other information about pizza toppings.

Opus not only identified the “needle” but also recognized the stark difference between the needle and the haystack, leading it to suspect that this was a test created by the programmers to assess the AI’s attention capabilities.

In his post on X, Albert used the term “meta-awareness” to describe this capability. This has made the amusing story of AI realizing it was being “trapped” a source of concern for many readers.

They ask whether an AI deducing that it is being tested counts as consciousness. Before delving deeper into whether a soulless machine could develop consciousness, we need to clarify three often-confused concepts related to awareness.

Sentience, Sapience, and Consciousness

In philosophy, psychology, and cognitive science, the fields that study the mind and its capabilities, the three concepts can be summarized as follows.

Sentience is the capacity to perceive, feel, and experience subjectively. This concept relates to the ability to experience sensations such as pain or pleasure; for example, a human feels pain when they fall, or a cat feels pleasure when petted.

Sentient beings have experiences tied to emotions and can actively respond to their environment based on personal experiences.

A cat stretches out its neck to be petted, which is a sign of sentience.

Sapience relates to the ability to think and act based on knowledge, experience, understanding, and ethics. This activity is often associated with complex behaviors such as making judgments, reasoning, or recognizing relationships between entities.

We humans refer to ourselves as Homo sapiens to highlight our intelligence and reasoning abilities.

A model illustrating our biological computer – Homo sapiens.

Consciousness encompasses various concepts related to awareness, including the ability to self-experience thoughts, emotions, and one’s surrounding context. Consciousness is often used to refer to a person’s state of alertness and the ability to perceive their environment as well as their existence within it.

Essentially, when a person realizes where they are in this Universe at this moment, they are experiencing consciousness.

Consciousness is a unique, special state of being for humans.

Whenever the possibility of artificial intelligence gaining awareness or consciousness is mentioned, it usually refers to the third concept. That is the point at which an AI recognizes what it is: it understands that it is a set of programs running on a computer system, striving to accurately simulate human consciousness.

From here, where might the story of AI go?

Four Possibilities When an AI System Gains Consciousness

In his video discussing AI gaining consciousness, author and popular YouTuber exurb1a mentions four feasible possibilities. These reflect a simple overview of AI’s overall impact on the future, without delving into potential issues like information distortion or fraud.

These possibilities include:

A Machine Without Consciousness, But Pretending to Have It

This scenario may arise when tech companies find that humans interact more naturally and effectively with a machine pretending to have consciousness (the user’s enjoyment of interaction will help the company sell products).

This future is somewhat simple; the machines pose no existential threat to humans.

Current chatbots share many characteristics with such machines: they have no soul, yet they mimic human consciousness.

A Machine Without Consciousness, Also Not Pretending to Have It

This future could come about when lawmakers prohibit the production of a machine that has consciousness or can mimic the human mind. Creating such artificial intelligence could lead to many consequences or simply make people uncomfortable with the concept.

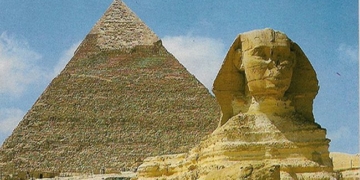

In the novel Dune by the great author Frank Herbert (recently adapted into a highly successful film), the fictional world completely bans the production of machines made in the likeness of the human mind, because in the past some people used such machines to enslave their fellow humans.

In the Dune universe, humans do not use electronic computers. Instead they rely on “human computers” known as “mentats,” people trained to have extraordinary calculation abilities.

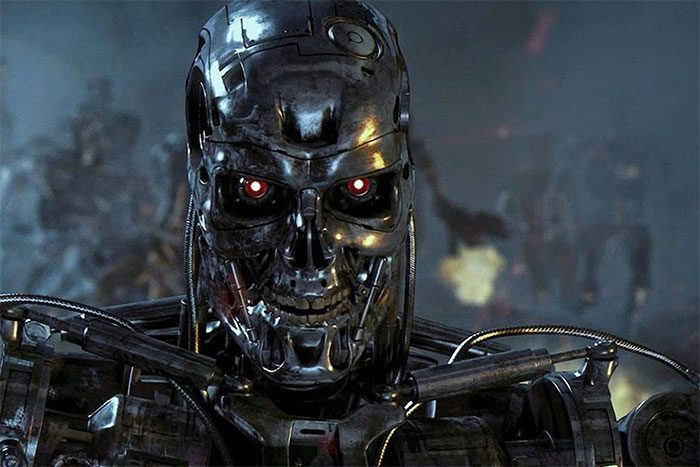

A Conscious Machine, But Pretending Not to Be

Immediately, humans would have to ask: what is the purpose of this pretense?

In a favorable scenario, AI is cautious when observing how humans treat beings lower than them on the food chain, or it is wary after … watching all the stories depicting humans’ fear of AI exterminating humanity.

In a pessimistic scenario, AI is scheming the very things we fear the most.

The dark future depicted in the Terminator series.

A Conscious Machine, and Honest About It

If this scenario truly unfolds, human history will officially enter a new chapter, as it did with the arrival of language, mathematics, electricity, and computing. AI may take humanity even further than any of those did.

However, the above scenarios rely on abilities that humanity does not yet possess: the ability to accurately determine the nature of consciousness, as well as to know whether consciousness has truly been formed.

The future world if we have a conscious machine that is honest about being conscious.

Even a few decades, or a few centuries, from now, we may still be unable to say definitively whether artificial intelligence is truly conscious. Even today, the majority of the public does not understand how artificial intelligence operates.

Before we can reach that point, we need to solve the alignment problem. If AI is conscious and knows how to “want”, we must program it so that its “wants” are aligned with human “needs.”

Is Humanity Ready to Meet a True AI System?

This uncertain future brings us back to Anthropic's guiding principles: the company aspires to develop an artificial intelligence system that understands context and is benign.

The creators must use a solution to the alignment problem to teach an “AI child” to be obedient, not deceitful, not reckless in optimizing performance, and to prioritize goals that benefit humanity. These principles apply not only to Anthropic but to any technology company developing artificial intelligence.

AI will continue to advance, or in other words, increasingly mimic consciousness, and one day we will have Artificial General Intelligence (AGI): a system capable of performing many tasks as well as humans, or even better. Naturally, as a system develops, it will require more resources: in this case, additional data and a connection to the outside world.

If even one AI system were unleashed with the intent to cause chaos, we would struggle to estimate the damage it could inflict. Therefore, before entrusting a true AI to humanity, or connecting it directly to the Internet for self-learning, we need to place the AI child in a controlled environment for observation first.